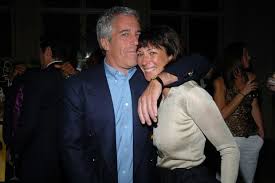

In a surprising move, Ghislaine Maxwell, the British heiress and confidante to the late financier Jeffrey Epstein, has been arrested in New Hampshire. Maxwell’s arrest could have a ripple effect on both criminal and civil matters ranging from the still uncertain status of Prince Andrew to a number of defamation lawsuits. One of Maxwell’s principal accusers is Virginia Roberts Giuffre, who has filed lawsuits against Maxwell as well as figures like Harvard Professor Alan Dershowitz. It appears that the charges derive from the U.S. Attorney for the Southern District of New York, another indication that the recent controversy over the replacement of the U.S. Attorney has not impacted underlying investigations.

Frankly, as a criminal defense lawyer, I am surprised that Maxwell risked returning to the United States. She was believed to be living in Paris. It was well-known that the Justice Department was pursuing the case, including demands to interview Prince Andrew.

Her arrest may be unnerving for figures like Prince Andrew. She would be the ultimate cooperating witness if she decided to cooperate on broader criminal inquiries. Giuffre and others have alleged that she was the primary procurer of young girls for Epstein to abuse.

Such prosecutions are not easy given the passage of time. However, the government clearly has live witnesses like Giuffre who might have a significant impact on a jury. The government would have to show more than her mere presence at these homes or parties.

She is currently facing six counts tied to her alleged assistance to Epstein in finding girls as young as 14 years old for sexual acts dating back as far as 1994.

“In some instances, Maxwell was present for and participated in the sexual abuse of minor victims,” the indictment says.

One interesting twist will be the infamous deal cut with Epstein. I was a vocal critic of that deal. Despite a strong case for prosecution, Epstein’s lawyers, including Alan Dershowitz and Ken Starr, were able to secure a ridiculous deal with prosecutors. He was accused of abusing more than forty minor girls (with many between the ages of 13 and 17). Epstein pleaded guilty to a Florida state charge of felony solicitation of underage girls in 2008 and served a 13-month jail sentence. Epstein was facing a 53-page indictment that could have resulted in life in prison. However, he got the 13-month deal. Moreover, to my lasting surprise, the Senate approved the man who cut that disgraceful deal, former Miami U.S. attorney Alexander Acosta, as labor secretary. He later resigned.

That deal does not mention Maxwell but it expressly stated that federal prosecutors “will not institute any criminal charges against any potential co-conspirators of Epstein, including but not limited to Sarah Kellen, Adriana Ross, Lesley Groff, or Nadia Marcinkova.” Any criminal charge linked to the Epstein case against Maxwell would seem to be a charge against a “potential co-conspirator of Epstein.”

That deal, however, was not lawfully executed by Acosta, who did a great disservice not only to justice but, more importantly, to these victims. Judge Kenneth A. Marra of Federal District Court in West Palm Beach found, as many of us have long argued, that the deal cut by Acosta violated federal law and allowed the infamous financier to get a disgracefully low sentence. Here is the opinion.

Thus, the improper role played by the Justice Department in the case may actually help it now with any prosecution of Maxwell.

Update:

Any problem with the Acosta deal may be avoided not just by attacking the validity of the agreement, as noted, but by relying on perjury counts stemming from depositions (which would not be impacted by the agreement even if enforceable). Here are the charges:

Count One: Enticement of a Minor To Travel To Engage In Illegal Sex Acts

Count Two: Enticement of a Minor To Travel To Engage In Illegal Sex Acts

Count Three: Transportation Of A Minor With Intent To Engage In Criminal Sexual Activity

Count Four: Transportation Of A Minor With Intent To Engage In Criminal Sexual Activity

Count Five: Perjury

Count Six: Perjury

Here is the indictment: U.S. v. Ghislaine Maxwell

https://www.winterwatch.net/2020/07/the-ghislaine-maxwell-deception/

“Believe nothing about this arrest saga. Where’s Jizz? Has anybody actually seen her? Conveniently, a new law passed in April of this year in New York banned the release of mugshots and ended perp walks. What a cowinkydink. ”

https://twitter.com/LionelMedia/status/1279021603410653185

Lionel@LionelMedia

Believe absolutely nothing about the #GhislaineMaxwell arrest saga. Where’s Jizz? Has anybody actually seen her? Have you seen a mugshot? Do not believe for a moment this secluded NH compound scheiße story. She holds the key to destroying the [DS]. #GhislaineDidntKillHerself

“Fake News: Chief Justice John Roberts Did NOT Fly With Jeffrey Epstein On At Least Two Occasions”

https://leadstories.com/hoax-alert/2019/08/fake-news-john-roberts-not-fly-with-epstein-on-at-least-2-occasions.html

It is fake news (negative) that he did not (negative) travel on the lolita express two times.

So it is real news that he did travel two times. Or someone with the same name did. Or something like that.

Obviously they managed to somehow nullify her dead man’s switch, or they never would have arrested her, as the FBI has had multiple opportunities to do so over the past 11 months, but they left her alone.

Or she still has a dead man’s switch, and cut some sort of deal with the Feds before coming back to the US to be willfully arrested.

If it is the former, then she is as good as dead.

Swamp prosecutor gets canned and “blow the lid off the pedophile swamp” defendant gets arrested. Correlation, coincidence or causation? I don’t care. Who is that “John Roberts” character on the flight log of the Lolita Express!

A one “John Roberts.”

Ouch!

R.I.P.

Some high profile people must be shaking in their boots.

At a guess, The Great Showman is going to present the Greatest October Surprise of which this will be but one act.

Anonymous disappeared for the 3rd time…

Best to take it up with Jonathan Turley, himself, so that he knows what’s going on. He should see a sampling of the comments that are being deleted. Some might be offensive, but there are equally offensive comments that aren’t deleted. Some have free rein to disparage others, but dishing it back isn’t allowed?

Who’s deleting comments? Darren, Jonathan Turley, Mespo? Others?

Free speech. LOL.

Anonymous – if comments are being deleted it is probably Darren, then JT, then one of his sons has access to the blog, but I do not know if he has deletion rights.

Anonymous:

Delete you? I’d put your foolishness up on a billboard on I95 so others could see it if I could. Truth in advertising you know. Now if you just had the guts to add your real name (like a man) or a snappy pseudonym (like a bright woman). How’s Run Tell Mommy Puse’ work?

Ah, the “vaunted” Southern District of New York gets another key person in the puzzle into custody. What is the over/under on the number of days she has left? Too many people (such as Bill Clinton) have too much exposure. She’ll be gone soon. The Justice Dept. is mostly Democrats. Adios, girlfriend!

It is wildly implausible that Alex Acosta acted alone when he approved Epstein’s original plea deal. Surely a deal with someone as influential and well connected as Epstein, and for such a lurid crime, would have been known to Acosta’s superiors. It didn’t draw public attention until Acosta became Labor Secretary some 10 years later, and yet Acosta took the fall. There’s something fishy going on.

Wonder how Bill Clinton and Prince Andrew will sleep tonight?

EDKH – One of the guys on The Blaze said the phones are hot today. There are 40 sealed indictments waiting to be opened.

Indictments on this? The Durham investigation? Or something else?

EDKH – supposedly on the Epstein matter. I have heard that before.

A book came out a couple weeks ago claiming that Bill was a regular on the Lolita Express because he was doing the nasty with Ghislaine, not the little ones. A little well-timed air cover for the big man? Could have a big effect on the over/under on the Ghislaine pool.

EDKH – there are a lot of people who would want her dead. Besides, how many people have written cover stories for the Clintons?

And it was also reported Ghislaine would join in while Epstein was having his time with the underage ladies. Do you think there is a chance Ghislaine and Clinton included a young one also?

J – YES

The plea deal was concluded in the interval between Alberto Gonzales’s departure and the time Michael Mukasey took office. The chief of the Criminal Division at the time was a fairly young woman named Alice Fisher. She is now a partner at Latham & Watkins.

There is a timeline in the Miami Herald showing that the negotiation and implementation of the NPA and plea deal straddled about a year in 2007-2008, on either side of both Gonzales and Mukasey. It is well documented that there were a number of discussions between Acosta, Epstein’s attorneys and Washington DOJ during this time. Acosta wasn’t acting in a vacuum. I’ve always found the lack of curiosity into what actually happened to be astounding.

No, the salient date fell in the interval between Gonzales and Mukasey.

I would love to know the full story.

Very well on a bed made of money.

Alone

I think Maxwell does not get bail because she is a flight risk. People born in France as she was cannot be extradited and she has a home there. She also has lots of friends with private jets. If she is locked up for the duration and this trial doubtless will take a while, I think she cracks. I don’t know what prompted her to travel to the USA but my guess is it had something to do with thinking she was safe as long as Geoffrey Berman was acting DA in SDNY.

Peter Berg – one of the guys on The Blaze was saying he didn’t think she would last the night. Her fear should be the Royal Family.

I don’t think the Queen has a death squad at her disposal.

DSS – they have MI5 and MI6

Who killed Diana?

Henri Paul.

You think wrong.

I understand Maxwell will be held at the Metropolitan Correctional Center where Jeffrey Epstein died. (Not a joke) How long before Maxwell commits “suicide”?

Robert Nova – We have a pool going. It is open to everyone. Just send me your date of anticipated “Arkancide.”

Ah, a little question, guys. It probably doesn’t matter, but is that former FBI Director Comey’s daughter on the prosecution team?

https://www.justice.gov/usao-sdny/pr/ghislaine-maxwell-charged-manhattan-federal-court-conspiring-jeffrey-epstein-sexually

“This case is being handled by the Office’s Public Corruption Unit. Assistant U.S. Attorneys Alex Rossmiller, Alison Moe, and Maurene Comey are in charge of the prosecution.”

here’s another question.

did Trump & AG Barr firing the malingering AUSA at SDNY last week, have anything to do with knocking this case loose and making it happen?

The DA Berman gets fired and less than two weeks later the Democrats introduce an impeachment resolution in the House and two days later we finally get movement on this case. Sure that is just coincidence. People and their wacky conspiracy theories! Bill Barr is the deep state’s worst nightmare, but I am afraid they will run the clock on him and both he and Trump will have to retire to Hungary to escape prosecution by the Biden administration.

Zerohedge posted that one of the reasons Trump fired Berman is because the DOJ gave him piles of evidence Re. Biden’s crimes Re. Ukraine. Instead of furthering the investigation, Berman sat on it. Finally the current Ukraine government started releasing evidence incriminating Biden; much more coming. The DNC already circles the wagons, writing the appropriate response narrative.

Trump is a major doofus, but I’d still take Donny a million times over a Demonkrap.

She will skate if Trump loses election, or, state that Trump was heavily involved when he wasn’t, just to have an investigation.

thank you, Jeff Sessions, for starting this. Thank you, Tom Fitton and Attorney Durham for following up. Where is Bill Barr?

Thank you Tom Fitton? No. Thank you Larry Klayman.

Freedom Watch and Klayman File Class Action Suit vs. China Over COVID-19

OVER $20 TRILLION IN DAMAGES DEMANDED

Jury Trial Requested in Texas Federal Court

DALLAS, March 19, 2020 /PRNewswire/ — Today Larry Klayman, the founder of both Judicial Watch and now Freedom Watch and a former federal prosecutor, announced the filing of a class action complaint in the U.S. District Court for the Northern District of Texas (20-cv-656) for damage caused by China related to the COVID-19 virus.

“Dead Woman Walking.”

Like Vince Foster, she knows all about the skeletons in the closet and where the bodies are buried.

Bill has already called the Arkansas mafia.

One call does it all.

https://www.youtube.com/watch?v=whQQpwwvSh4

AC DC DIRTY DEEDS yeah

Another Arkancide would be such a coincidence. I expect she is going to have Covid 19, a fatal case of it and die on the respirator. Dr. Fauci can oversee the settings.

This would be far more plausible. Who could argue with that?

Remember that is what happened to that Chinese doctor after he spilled the beans on corona.

He was healthy, young and knew how to protect himself. Dying was just very convenient for the CCP.

Vincent Foster committed suicide.